Designing Data Intensive Applications: Ch5. Replication (Part 3: Multi-Leader Replication)

In the previous article we discussed some of the main side effects that result from having a replicated system, mainly originating from the possibility of having a lag in replication. And tried to enforce some consistency guarantees, like for example, being able to read your own writes, being able to serve monotonic and consistent prefix reads. Then we discussed some solutions for replication lag in modern database systems.

In this article, we will shift our attention to a different paradigm in replication in which we have multiple leaders in the replicated system, which is called Multi-Leader Replication. We will explore the use cases that require multi-leader replication such as, replication over multiple datacenters, offline client applications and collaborative editing applications. Then we will discuss the main problems that arise from multi-leader replication, mainly, writes conflicts and go through some of the approaches that can be used for conflict resolution such as, synchronous conflict resolution, conflict avoidance, convergent and custom conflict resolution and some approaches for automatic conflict resolution such as conflict-free replicated datatypes (CRDTs) (2-way merge), mergeable persistent data structure (3-way merge) and operational transformation. Then finally, we will go over some of the famous topologies for multi-leader replication such as, circular, star topologies and even more dense topologies like all-to-all.

Multi-Leader Replication

So far in the previous articles we have considered replication architectures using a single leader. Although that is a common approach, there are interesting alternatives.

Leader-based replication has a major downside which is the fact that all writes has to go to a leader node. In single-leader replicated systems, this downside is even more amplified by the fact that those types of systems has a single leader. That means that if this leader is down for any reason, all writes will fail, which is considered a single point of failure.

A natural extension of the leader-based replication model is to allow more than one node to accept writes. Replication still happens in the same way: each node that processes a write must forward that data change to all the other nodes. We call this a multi-leader configuration (also known as master–master or active/active replication). In this setup, each leader simultaneously acts as a follower to the other leaders.

Use Cases for Multi-Leader Replication

Multi-datacenter operation

Sometimes we want to deploy a database to multiple datacenters in different geographical locations in order to be closer to where the users are and to be able to tolerate an entire datacenter failure. With a normal leader-based replication setup, the leader has to be in one of the datacenters, and all writes must go through that datacenter.

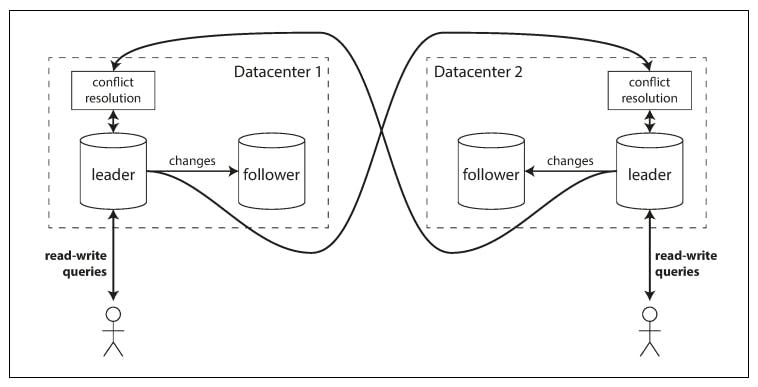

In a multi-leader configuration, we can have a leader in each datacenter. The following diagram shows an example of a multi datacenter database.

As we we can see here, this database is deployed in 2 datacenters. Each of the datacenters has a leader and a follower. So what happens here is that writes go to the leader of the target datacenter which replicates it's change to the follower within the same datacenter and also replicates changes to the leader in the other datacenter.

Now let’s compare how the single-leader and multi-leader configurations fare in a multi- datacenter deployment:

Performance

In a single-leader configuration, every write must go over the internet to the datacenter with the leader. This can add significant latency to writes which might diminish the usefulness of having multiple datacenters in the first place. On the other hand, in a multi-leader configuration, every write can be processed in the local datacenter and is replicated asynchronously to the other datacenters. Thus, the inter-datacenter network delay is hidden from users.

Tolerance of datacenter outages

In single leader replicated systems, the leader is located in on the datacenters. If a failure in that datacenter happens and it goes offline, the system will not be able to process any writes until a failover to another node (in a different datacenter) can happen. While in a multi-leader configuration, each datacenter can continue operating independently of the others, and replication catches up when the failed datacenter comes back online.

Tolerance of network problems

Traffic between datacenters usually goes over the public internet, which may be less reliable than the local network within a datacenter. A single-leader configuration is very sensitive to problems in this inter-datacenter link, because writes are made synchronously over this link. A multi-leader configuration with asynchronous replication can usually tolerate network problems better: a temporary network interruption does not prevent writes being processed.

Despite all the aforementioned advantages of a multi-leader replication systems, it's usually considered a dangerous territory that should be avoided if possible. There are lot of problems that happen like for example, the same set of data can be written or modified on two different leaders at the same time which causes what's called a Write Conflict (we will discuss later on). Also, multi-leader replication is often retrofitted into database systems (few database systems have it by default or enable it via 3rd party actors), which causes this type of replication to behave unexpectedly with some database features, like for example, autoincrementing keys, triggers, integrity constraints, etc.

Clients with offline operation

Another use case for a multi-leader replication system is when your system needs to continue to function even when it's offline. To understand this better, let's take the following example:

If you own an iPhone, an iPad and a Macbook for example and you're using the calendar app. You should be able to view your upcoming meetings (make read requests) even when you're offline. You should also be able to add more meetings (make writes requests) when you're offline as well. And then you go online, those meetings should be synced to your other devices, so if you do that on your iPhone while you're offline, you should be able to view your recent changes (done while offline) on your mac and iPad when you go back online.

In this case, every device has a local database that acts as a leader (it accepts write requests), and there is an asynchronous multi-leader replication process (sync) between the replicas of your calendar on all of your devices. The replication lag may be hours or even days, depending on when you have internet access available.

From an architectural point of view, this setup is essentially the same as multi-leader replication between datacenters, taken to the extreme: each device is a “datacenter,” and the network connection between them is extremely unreliable.

Collaborative editing

Real-time collaborative editing applications allow several people to edit a document simultaneously. For example, Google Docs, allow multiple people to concurrently edit a text document or spreadsheet.

Collaborative editing is not usually thought of as a database replication problem, but it has a lot in common with the previously mentioned offline editing use case. When one user edits a document, the changes are instantly applied to their local replica (the state of the document in their web browser or client application) and asynchronously replicated to the server and any other users who are editing the same document.

If we want to guarantee that there will be no editing conflicts, the application must obtain a lock on the document before a user can edit it. If another user wants to edit the same document, they first have to wait until the first user has committed their changes and released the lock. This collaboration model is equivalent to single-leader replication with transactions on the leader.

However, for faster collaboration, we may want to make the unit of change very small (a single keystroke) and avoid locking. This approach allows multiple users to edit simultaneously, but it also brings all the challenges of multi-leader replication, including requiring conflict resolution.

Handling Write Conflicts

The biggest problem with multi-leader replication is that write conflicts can occur, which means that conflict resolution is required.

To understand this better, let's consider the following example:

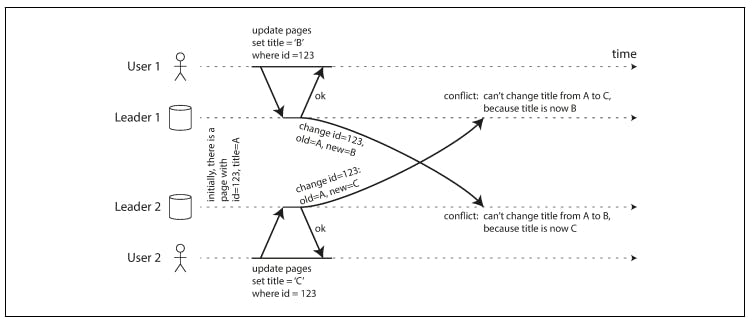

Assume we have a collaborative page with title initially being "A". As we see in the diagram, we have a multi-leader replicated system that has 2 leaders, 1 and 2. User1 sends an update request to change the title of the page from "A" to "B". Meanwhile, user2 sends a write request as well, that's processed by a different leader, to also change the title of the page, but from "A" to "C".

The problem here is that each user is communicating with its own leader, so both write requests, albeit contradictory, will be accepted. And then at a later stage, when both leaders asynchronously replicate their state to each other, a conflict will be detected.

In a single leader system, those writes will go to the same leader and conflicts can be handled more promptly, like for example, failing one of the writes and asking the user to retry, or even accepting the last update because writes on a single leader can be ordered. But in multi-leader system, this conflict is discovered at a late stage.

So how can conflicts be resolved in a multi-leader system?

Synchronous conflict detection

The first, and very obvious and actually useless method is synchronous conflict detection. In principle, you could make the conflict detection synchronous—i.e., wait for the write to be replicated to all replicas before telling the user that the write was successful. However, by doing so, you would lose the main advantage of multi-leader replication: allowing each replica to accept writes independently. If you want synchronous conflict detection, you might as well just use single-leader replication.

Conflict avoidance

The simplest strategy for dealing with conflicts is to avoid them: if the application can ensure that all writes for a particular record go through the same leader, then conflicts cannot occur.

For example, in an application where a user can edit their own data, you can ensure that requests from a particular user are always routed to the same datacenter and use the leader in that datacenter for reading and writing.

However, sometimes you might want to change the designated leader for a record— perhaps because one datacenter has failed and you need to reroute traffic to another datacenter, or perhaps because a user has moved to a different location and is now closer to a different datacenter. In this situation, conflict avoidance breaks down, and you have to deal with the possibility of concurrent writes on different leaders.

Converging toward a consistent state

A single-leader database applies writes in a sequential order: if there are several updates to the same field, the last write determines the final value of the field.

In a multi-leader configuration, there is no defined ordering of writes, so it’s not clear what the final value should be. In the previous example, at leader 1 the title is first updated to B and then to C; at leader 2 it is first updated to C and then to B. Neither order is “more correct” than the other.

If each replica simply applied writes in the order that it saw the writes, the database would end up in an inconsistent state: the final value would be C at leader 1 and B at leader 2. That is not acceptable—every replication scheme must ensure that the data is eventually the same in all replicas. Which means that the database should always resolves conflicts in a convergent way.

There are various ways of achieving convergent conflict resolution:

- Give each write a unique ID (e.g., a timestamp, a long random number, a UUID, or a hash of the key and value), pick the write with the highest ID as the winner, and throw away the other writes. If a timestamp is used, this technique is known as last write wins (LWW). This approach implies data loss (because one value will be discarded).

- Give each replica a unique ID, and let writes that originated at a higher-numbered replica always take precedence over writes that originated at a lower- numbered replica. This approach also implies data loss.

- Somehow merge the values together—e.g., order them alphabetically and then concatenate them, in our example, the title would be "B/C". This keeps both values but the final result might not make sense to the user.

- Record the conflict in an explicit data structure that preserves all information, and write application code that resolves the conflict at some later time (perhaps by prompting the user). This like your git conflicts, non of the code is thrown away, and you're prompted to resolve those conflicts manually via some application logic.

There are distributed databases like for example, Google Spanner, that has this kind of configuration and very tough guarantees like, external consistency. External consistency means, that although transactions are concurrent and distributed, they would appear to be executed at the same global order they were initiated at with respect to some time reference. For example, if we have 2 transactions \(T_1\) and \(T_2\) and \(T_1\) commits before \(T_2\) starts, then this means that \(T_1\) commit timestamp must be smaller than \(T_2\)'s.

It uses a complicated API called TrueTime to timestamp transactions and employees consensus algorithms to guarantee convergence (it uses a Paxos Replicated State Machine).

This is a very interesting read because it's a real-life, practical and production ready database that implements all that complex logic.

Custom conflict resolution logic

As the most appropriate way of resolving a conflict may depend on the application, most multi-leader replication tools let you write conflict resolution logic using application code. That code may be executed on write or on read:

On write

As soon as the database system detects a conflict in the log of replicated changes, it calls the conflict handler. This handler typically cannot prompt a user—it runs in a background process and it must execute quickly.

On read

When a conflict is detected, all the conflicting writes are stored. The next time the data is read, these multiple versions of the data are returned to the application. The application may prompt the user or automatically resolve the conflict, and write the result back to the database. CouchDB works this way.

Automatic Conflict Resolution

There has been some interesting research into automatically resolving conflicts caused by concurrent data modifications. A few lines of research are worth mentioning:

- Conflict-free replicated datatypes (CRDTs) are a family of data structures for sets, maps, ordered lists, counters, etc. that can be concurrently edited by multiple users, and which automatically resolve conflicts in sensible ways. You can read more about this in Distributed Systems for Fun and Profit.

- Mergeable persistent data structures track history explicitly, similarly to the Git version control system, and use a three-way merge function (whereas CRDTs use two-way merges).

- Operational transformation is the conflict resolution algorithm behind collaborative editing applications such as Google Docs. It was designed particularly for concurrent editing of an ordered list of items, such as the list of characters that constitute a text document.

Multi-Leader Replication Topologies

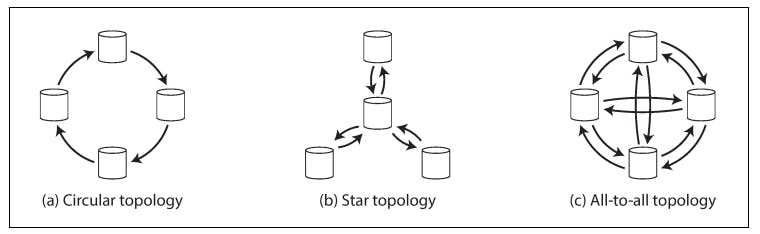

A replication topology describes the communication paths along which writes are propagated from one node to another. If you have two leaders, there is only one plausible topology: leader 1 must send all of its writes to leader 2, and vice versa. With more than two leaders, various different topologies are possible.

The most general topology is all-to-all, in which every leader sends its writes to every other leader. However, more restricted topologies are also used: for example, MySQL by default supports only a circular topology, in which each node receives writes from one node and forwards those writes (plus any writes of its own) to one other node. Another popular topology has the shape of a star: in which one designated root node forwards writes to all of the other nodes. The star topology can be generalized to a tree.

In circular and star topologies, a write may need to pass through several nodes before it reaches all replicas. Therefore, nodes need to forward data changes they receive from other nodes. To prevent infinite replication loops, each node is given a unique identifier, and in the replication log, each write is tagged with the identifiers of all the nodes it has passed through. When a node receives a data change that is tagged with its own identifier, that data change is ignored, because the node knows that it has already been processed.

A problem with circular and star topologies is that if just one node fails, it can interrupt the flow of replication messages between other nodes, causing them to be unable to communicate until the node is fixed. Meanwhile, the fault tolerance of a more densely connected topology (such as all-to-all) is better because it allows messages to travel along different paths, avoiding a single point of failure.

Summary

In this article, we discussed a different paradigm in replication in which we have multiple leaders in the replicated system, which is called Multi-Leader Replication. We explored the use cases that require multi-leader replication such as, replication over multiple datacenters, offline client applications and collaborative editing applications. Then we discussed the main problems that arise from multi-leader replication, mainly, writes conflicts and go through some of the approaches that can be used for conflict resolution such as, synchronous conflict resolution, conflict avoidance, convergent and custom conflict resolution and some approaches for automatic conflict resolution such as conflict-free replicated datatypes (CRDTs) (2-way merge), mergeable persistent data structure (3-way merge) and operational transformation. Then finally, we went over some of the famous topologies for multi-leader replication such as, circular, star topologies and even more dense topologies like all-to-all.

In the next part of this chapter, we will discuss leaderless replication.